In enterprise IT, a false positive is typically a minor nuisance that is resolved by a simple exclusion rule. However, when Microsoft Defender flags a foundational component of internet security as a Trojan, the situation escalates into a critical failure. Over the past 24 hours, security teams worldwide have managed a widespread misclassification event involving DigiCert, a major Certificate Authority (CA). This incident underscores the risks inherent in automated heuristic engines when they misidentify trusted infrastructure as malicious.

The Anatomy of a False Positive Storm

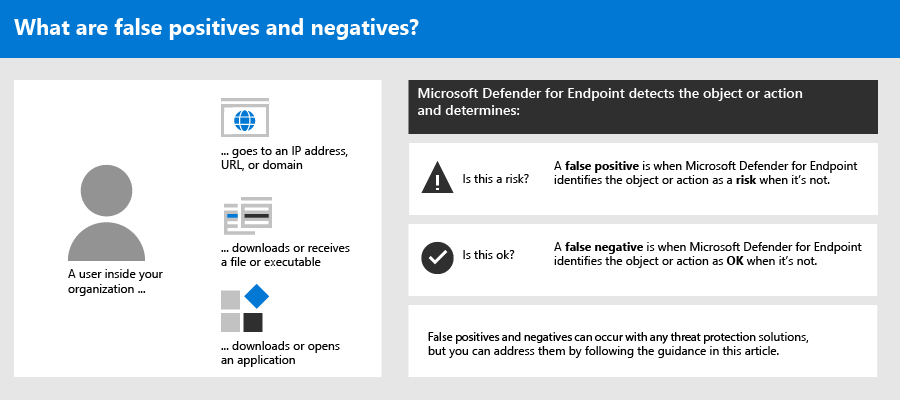

The issue began when Microsoft’s behavioral analysis engines started flagging legitimate DigiCert binaries, specifically those used for certificate management, as threats. Reports from various organizations indicate that Defender labeled these files as Trojan:Win32/Wacatac or under other generic malware names. Wacatac is a heuristic label Defender applies when it detects suspicious behavior; in this instance, the engine incorrectly identified standard administrative functions as malicious activity.

This event represents a collision between two essential pillars of digital infrastructure. DigiCert provides the cryptographic foundation for websites, VPNs, and internal corporate services. Because Microsoft Defender is integrated into Windows enterprise environments, flagging these tools as malware created an immediate denial-of-service scenario. The security software effectively disabled the very tools required to maintain network security, forcing IT departments to halt operations to restore access to critical binaries.

Heuristics vs. Reality: Why the Engine Tripped

Modern Endpoint Detection and Response (EDR) systems have moved beyond static hash matching, utilizing cloud-based machine learning to interpret the “intent” of a file. If software interacts with system certificates or modifies registry keys in a manner that matches a known malicious pattern, the engine triggers an alert. The difficulty arises when legitimate management utilities perform actions that mirror the behavior of sophisticated spyware.

It is likely that a recent update to the Defender definition database introduced an overly aggressive heuristic rule that misinterpreted how DigiCert’s utilities handle cryptographic operations. Because these engines are deployed across millions of endpoints, a single flawed update can cause widespread disruption. This highlights the trade-off in modern security: while automation allows for the rapid detection of zero-day exploits, it also introduces the risk of catastrophic, system-wide misfires.

The Ripple Effect on Enterprise Operations

The operational impact extends beyond quarantined files. Many organizations rely on these DigiCert utilities for Automatic Certificate Management Environment (ACME) workflows. When Defender quarantined these binaries, it disrupted the automated renewal of SSL/TLS certificates. If left uncorrected, this failure would lead to expired certificates, resulting in inaccessible websites and broken internal communications. The irony of the situation is clear: in an effort to secure the network perimeter, the security software compromised the identity layer of the infrastructure.
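One way to notice a stalled renewal before it becomes an outage is to track days until expiry independently of the renewal tooling. The following is a minimal sketch, assuming the certificates' `notAfter` strings have already been collected (for example from `ssl.SSLSocket.getpeercert()`). The host names, the 30-day window, and the helper names are hypothetical.

```python
import ssl
from datetime import datetime, timezone

RENEWAL_WINDOW_DAYS = 30  # assumed threshold; tune to your renewal policy


def days_until_expiry(not_after: str, now: datetime) -> float:
    """Return days between `now` and a certificate's notAfter timestamp.

    `not_after` uses the format returned by ssl.SSLSocket.getpeercert(),
    e.g. "Jan  5 09:34:43 2030 GMT".
    """
    expires = datetime.fromtimestamp(
        ssl.cert_time_to_seconds(not_after), tz=timezone.utc
    )
    return (expires - now).total_seconds() / 86400


def at_risk(inventory: dict[str, str], now: datetime) -> list[str]:
    """List hosts whose certificates expire within the renewal window."""
    return sorted(
        host
        for host, not_after in inventory.items()
        if days_until_expiry(not_after, now) <= RENEWAL_WINDOW_DAYS
    )


if __name__ == "__main__":
    # Hypothetical inventory for illustration only.
    inventory = {
        "intranet.example.com": "Jan 15 00:00:00 2030 GMT",
        "vpn.example.com": "Jun  1 00:00:00 2030 GMT",
    }
    now = datetime(2030, 1, 1, tzinfo=timezone.utc)
    print(at_risk(inventory, now))
```

Running a check like this from a host that does not depend on the quarantined utilities means a renewal failure is reported by something other than the tool that failed.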

IT administrators are currently calling for Microsoft to provide a global whitelist or a simplified rollback mechanism that avoids the need for manual intervention on every workstation. While Microsoft works on a resolution, the incident serves as a case study for the necessity of defense-in-depth strategies. Relying on a single vendor for an entire security stack creates a centralized point of failure. As the industry awaits official patches, professionals are re-evaluating how to maintain oversight when automated security tools malfunction.

Heuristics vs. Deterministic Security: The Trust Paradox

The core of this incident is the tension between deterministic security, which relies on known-good digital signatures, and heuristic analysis, which predicts intent based on code patterns. Most modern EDR solutions prioritize machine learning to identify threats that lack a known signature.

When a certificate management tool performs actions like modifying system stores or communicating with external servers, these are objectively suspicious if performed by an unknown binary. However, when these actions are executed by a signed, reputable utility from a root CA, the system should prioritize signature verification. The failure here suggests that the engine’s “trust” weighting was overridden by a hyper-sensitive detection threshold. This creates a paradox: as security tools become more aggressive to catch elusive threats, they become more likely to interfere with the infrastructure they are intended to protect.

| Mechanism | Role in False Positives | Mitigation Strategy |
|---|---|---|
| Heuristic Analysis | Identifies patterns; prone to over-sensitivity. | Behavioral baseline tuning and sandboxing. |
| Signature Verification | Validates file provenance; generally reliable. | Strict enforcement of CA-backed trust chains. |
| Cloud Intelligence | Real-time telemetry; can propagate errors globally. | Phased rollout and canary testing for updates. |
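The phased-rollout mitigation in the table can be sketched as deterministic bucketing: each endpoint is hashed into one of 100 buckets, and an update reaches only the endpoints whose bucket falls below the current rollout percentage. This is an illustrative sketch, not a description of how Microsoft ships Defender updates. The function names and the endpoint and update identifiers are hypothetical.

```python
import hashlib


def rollout_bucket(endpoint_id: str, update_id: str) -> int:
    """Map an endpoint to a stable bucket in [0, 100) for a given update."""
    digest = hashlib.sha256(f"{update_id}:{endpoint_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def should_receive(endpoint_id: str, update_id: str, percent: int) -> bool:
    """True if the endpoint is inside the current rollout percentage."""
    return rollout_bucket(endpoint_id, update_id) < percent


if __name__ == "__main__":
    # Hypothetical fleet and update name for illustration only.
    endpoints = [f"host-{n:04d}" for n in range(1000)]
    for percent in (1, 10, 50, 100):
        reached = sum(should_receive(e, "defs-example", percent) for e in endpoints)
        print(f"{percent:>3}% stage -> {reached} of {len(endpoints)} endpoints")
```

Because the bucket depends on both the endpoint and the update, the same machines are not always the canaries, and raising the percentage only ever adds endpoints to the set that already received the update.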

The Cascading Impact on Infrastructure-as-Code

For DevOps teams, the impact is not limited to workstations; it extends to CI/CD pipelines. When an automated build server identifies its certificate signing tools as threats, the entire software delivery lifecycle is disrupted. This leads to broken deployments and failed testing environments. The reliance on cloud-managed security agents means that remediation is not as simple as a reboot; it requires clearing caches and manually whitelisting binaries across large-scale environments.
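A pipeline can at least fail fast and explain why. The sketch below checks that a pinned build tool is still present and unmodified before the build starts, so that a quarantined binary surfaces as a clear preflight error rather than an obscure signing failure. The function names and the idea of pinning a SHA-256 digest in the pipeline configuration are assumptions for illustration.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large binaries are not loaded into memory."""
    h = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def preflight(tool: Path, expected_sha256: str) -> str:
    """Return 'ok', 'missing', or 'modified' for a pinned build tool."""
    if not tool.is_file():
        return "missing"   # possibly quarantined or removed
    if sha256_of(tool) != expected_sha256.lower():
        return "modified"  # replaced, updated, or tampered with
    return "ok"
```

A `missing` result on a build agent that worked yesterday is a strong hint to check the endpoint protection quarantine before debugging the pipeline itself.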

In highly regulated industries, the inability to verify the integrity of security tools during an incident creates a compliance crisis. Organizations are forced to decide between disabling security controls or accepting significant downtime, both of which introduce unacceptable levels of risk.

Moving Toward Resilient Verification

To prevent future incidents, the industry should prioritize provenance-based filtering. Security vendors need to implement a “trust-the-signer” hierarchy that is more reliable than behavioral analysis for high-reputation software. If a binary carries a valid signature whose certificate chains to a root CA already present in the Windows Trusted Root Certification Authorities store, the heuristic engine should require a higher threshold of suspicious activity before initiating a quarantine.
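A minimal sketch of that idea follows, assuming a behavioral score between 0 and 1 and a signature check that has already produced a verdict. The thresholds, field names, and the `decide` function are hypothetical and do not reflect Defender's internals.

```python
from dataclasses import dataclass

BASE_THRESHOLD = 0.60     # assumed threshold for unsigned or unknown binaries
TRUSTED_THRESHOLD = 0.90  # assumed higher bar for validly signed binaries


@dataclass(frozen=True)
class Observation:
    path: str
    behavior_score: float         # 0.0 (benign) to 1.0 (malicious), from heuristics
    signature_valid: bool         # signature verified and not revoked
    chains_to_trusted_root: bool  # chain ends at a root in the trusted store


def decide(obs: Observation) -> str:
    """Return 'allow', 'alert', or 'quarantine' for one observation."""
    trusted = obs.signature_valid and obs.chains_to_trusted_root
    threshold = TRUSTED_THRESHOLD if trusted else BASE_THRESHOLD
    if obs.behavior_score >= threshold:
        return "quarantine"
    if trusted and obs.behavior_score >= BASE_THRESHOLD:
        # Suspicious but signed: surface it to analysts instead of blocking.
        return "alert"
    return "allow"
```

With these assumed values, a signed utility scoring 0.7 produces an alert, while an unsigned binary with the same score is quarantined. The signature raises the bar rather than granting an exemption, since a signed binary with a very high score is still quarantined.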

This event serves as a reminder that enterprise tools require a contingency plan. Relying on one vendor for both the operating system and the security layer creates a single point of failure. Diversifying the security stack, while increasing complexity, provides essential insurance against global misconfiguration events.

Ultimately, the DigiCert-Defender incident highlights the fragility of current digital trust models. We rely on a complex, automated system where a single mislabeled update can disrupt critical infrastructure. As we continue to adopt AI-driven security, we must ensure these tools remain transparent and verifiable. The objective of security is to enable business operations, not to become an obstacle to them. Until the gap between aggressive heuristic detection and reliable identity verification is bridged, these disruptions will remain a significant risk to the modern enterprise.
